I would like to share some AI research that paints a future of teaching and learning with AI, which leans more towards the hopeful explorations of Star Trek than the grim battlegrounds of Ender’s Game.

Imagine this: AI starts eating its own homework, and instead of conjuring up a digital wonderland, it ends up turning into the most boring student in class. Picture a teacher like me, Eric Hawkinson, standing at the crossroads of the future, armed with nothing but a pointer and a stack of research papers, ready to tickle your intellect, perhaps your students’ hearts, and maybe your funny bone. Recent studies have thrown a curveball into my nightmarish vision of a future where creativity is vacuumed up by corporate giants and served back to us in a dystopian smoothie. Instead, we’re looking at a reality where AI-generated content doesn’t spiral into chaos but fades into the blandness of a diet cracker.

This twist in the tale lights up the path to a future less about machines overshadowing humans and more about a buddy-comedy starring teachers and AI. Think less “Terminator” and more “Star Trek,” where the AI is just another member of the ensemble cast, offering the occasional witty banter but definitely not stealing the spotlight. So, as we wade through the findings of AI learning from AI, let’s envisage a classroom not as a battleground for human vs. machine but as a mental gym where everyone, including the AI, gets a chance to shine. Stick with me, Eric Hawkinson, your jovial learning futurist, as we dissect what this all means for the future of education, promising a script where teachers aren’t replaced but are instead the headlining act.

Merging Worlds: My Foray into AI-Generated Art

In this era of technological marvels, where artificial intelligence has woven itself into the very fabric of our creative endeavors, we find ourselves at a crossroads. As a futurist deeply immersed in the journey of learning and discovery, I have observed with keen interest how AI has surged to prominence in the realm of art. This surge is not a mere blip on the radar; it represents a pivotal moment where the collective imagination of artists and creators worldwide is being both challenged and inspired by tools like Midjourney and OpenAI’s DALL-E 2.

My own adventures into the realms of AI-generated artistry, mixing the storied aesthetics of Japanese woodblocks with the swirling reds of Jupiter and the serene Earthrise, have sparked a mixture of pride and pondering within me. It raises the question: in prompting an AI to execute these imaginative mergers, do I tread the path of the artist, or am I merely a curator of concepts?

This question is not just a drop in the ocean of curiosity but a wave that engulfs the shores of our understanding of creativity. The allure of AI, with its seemingly infinite creative potential, initially presented a vista of boundless artistic expression. Yet, as we delve deeper, we uncover a paradox. The diversity and ingenuity that AI promises might indeed be nothing but an illusion, one that gradually fades as AI begins to dine on its digital creations. This self-referential cycle, where AI-generated data feeds into the training of future models, poses a subtle yet significant threat: the homogenization of art and the narrowing of creative diversity.

The Echo Chamber of AI: Content Generation’s Self-Feeding Cycle

Consider this: The examples of AI artistry I’ve shared, from the grand to the whimsical, serve not only as a testament to AI’s current capabilities but also as a mirror reflecting its potential confines. These explorations, while initially showcasing AI’s capacity to blend disparate themes into cohesive artworks, also hint at a future where creativity might circle a drain of predictability. The specter of repetition looms large as AI’s well of inspiration threatens to become a closed loop, recycling and rehashing rather than truly innovating. This unfolding narrative compels us to reevaluate our definitions of creativity in the digital age and to question the sustainability of AI’s role in the arts. It’s a tale of technological triumph and philosophical inquiry, challenging us to discern the essence of true creativity amidst the algorithmic alchemy of AI. As we stand at this juncture, pondering the future of artistic expression, it becomes clear that the journey ahead is as much about preserving the diversity and richness of human creativity as it is about embracing the possibilities that technology brings.

Emerging from the shadows of the digital landscape, a recent phenomenon has underscored a pivotal shift in the balance between human ingenuity and algorithmic prowess. As I delve into the intricacies of this transition, it becomes evident that the battleground of search engine optimization (SEO) has become a prime arena for witnessing the encroachment of AI-generated content on the sanctity of human creativity. In March 2024, amidst the tumult of Google’s latest spam update, a startling revelation came to light: AI spam sites, with their roots deeply embedded in the fertile grounds of algorithmic manipulation, began to eclipse the efforts of earnest creators, marking a significant juncture in our digital epoch.

This revelation was not merely an isolated incident but a symptom of a broader trend wherein SEO marketers and Google’s ranking algorithm find themselves locked in an ever-evolving game of cat and mouse. The instance of a spam site, emerging from dormancy to dominate search queries, is a testament to this. Ranking for over 217K queries, a significant portion of them in the top 10, this site exemplifies the prowess of AI in circumventing the safeguards meant to preserve the integrity of online content. The mechanics of this ascendancy are multifaceted, yet one element stands starkly apparent: the content populating these spam sites is not born of human intellect but is the progeny of previous AI generations. This recursive loop of AI begetting AI marks a departure from the original promise of artificial intelligence as a tool to augment human creativity. Instead, we find ourselves amidst an arms race, where the spoils of victory are not innovation or enlightenment but mere visibility in the digital expanse.

When AI Learns from AI: The Dumbing Down of Digital Creativity

In the evolution of language models, particularly in their capacity for generating linguistically diverse content, two recent studies offer pivotal insights. The first study, titled “The Curious Decline of Linguistic Diversity: Training Language Models on Synthetic Text,” conducted by Yanzhu Guo, Guokan Shang, Michalis Vazirgiannis, and Chloé Clavel, delves into the repercussions of training large language models (LLMs) on synthetic data produced by their precursors. This exploration is crucial in understanding the trajectory of AI-generated content’s linguistic diversity.

Link to Paper: https://arxiv.org/pdf/2311.09807.pdf

This investigation illuminates a gradual yet unmistakable decline in linguistic diversity across various dimensions—lexical, syntactic, and semantic—when models are recursively fine-tuned on data generated by preceding iterations of themselves. Specifically, the study highlights a marked reduction in the variety of models’ outputs through successive training cycles, underscoring the potential risks of such training methodologies on the preservation of linguistic richness. Crucially, the study introduces a comprehensive suite of novel metrics aimed at evaluating different facets of linguistic variation. By applying these metrics across three natural language generation tasks, each necessitating varying levels of creativity, the research reveals a consistent downturn in linguistic diversity. This trend is particularly notable in tasks requiring high creativity, where the degenerative learning process of training on synthetic data from predecessors results in outputs with significantly less diversity.
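To make “lexical diversity” a little more concrete, here is a minimal sketch of one widely used measure, distinct-n: the share of n-grams in a set of texts that are unique. This is an illustration only, not the study’s own code or its full metric suite; the function name `distinct_n`, the whitespace tokenization, and the sample sentences are all my assumptions.

```python
from collections import Counter

def distinct_n(texts, n=2):
    """Ratio of unique n-grams to total n-grams across a list of texts.

    Illustrative only: tokenizes on whitespace, which is an assumption
    and much cruder than what a real evaluation would use.
    """
    ngrams = Counter()
    for text in texts:
        tokens = text.lower().split()
        # Slide a window of size n over the tokens.
        for i in range(len(tokens) - n + 1):
            ngrams[tuple(tokens[i:i + n])] += 1
    total = sum(ngrams.values())
    return len(ngrams) / total if total else 0.0

# Hypothetical outputs: one varied set, one that repeats itself.
varied = ["the cat sat on the mat", "a storm rolled over the quiet harbor"]
repetitive = ["the cat sat on the mat", "the cat sat on the mat"]

print(distinct_n(varied))      # every bigram appears once
print(distinct_n(repetitive))  # half of the bigrams are repeats
```

A score near 1 means almost no phrase is reused; a falling score across training generations would be one sign of the narrowing the study describes.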

Even in tasks with a lower degree of creative demand, such as news summarization and scientific abstract generation, there’s a discernible erosion of linguistic diversity over iterative training cycles.

Yanzhu Guo, Guokan Shang, Michalis Vazirgiannis, and Chloé Clavel

This decline in diversity is not merely a theoretical concern but manifests in tangible ways. For instance, the study documents how, even in tasks with a lower degree of creative demand, such as news summarization and scientific abstract generation, there’s a discernible erosion of linguistic diversity over iterative training cycles. This erosion reflects a convergence towards uniformity, stripping the generated content of its linguistic nuance and variety. Moreover, the study sheds light on the underestimated importance of syntactic diversity. Unlike lexical diversity, which has been extensively studied, the impact of training methodologies on syntactic variation has received less attention. The research underscores this aspect by showing a significant reduction in syntactic diversity, akin to the declines observed in lexical and semantic diversity. This finding emphasizes the necessity for future research in natural language generation to consider syntactic diversity as a critical component of linguistic richness. As language models increasingly rely on synthetic data for training, there’s a clear indication of the need for careful consideration and potentially novel approaches to maintain, if not enhance, the linguistic diversity of their outputs. This challenge is paramount in ensuring that the advancements in AI and natural language processing contribute positively to the richness and variability of human language, rather than diminishing it.

A Vision Beyond Text: The Homogenization of AI-Generated Imagery

The phenomenon of diminishing creativity and diversity in AI-generated content, initially observed in text generation, extends into the realm of image generation as well. A study by Ryuichiro Hataya, Han Bao, and Hiromi Arai, titled “Will Large-scale Generative Models Corrupt Future Datasets?” explores this issue within the context of image datasets. The researchers scrutinize the impact of large-scale, text-to-image generative models like DALL-E 2, Midjourney, and Stable Diffusion, which have gained popularity among both the research community and ordinary internet users. These models can produce high-quality, realistic images from textual prompts, leading to a substantial volume of AI-generated images circulating on the internet. The core of their investigation is the potential degradation of future datasets used for training computer vision models due to the influx of AI-generated images. This “contamination” of datasets with synthetic images could have both positive and negative effects on the performance of computer vision models. By creating ImageNet-scale and COCO-scale datasets composed solely of images generated by a state-of-the-art generative model, the study empirically examines how such “contaminated” datasets influence model performance across various tasks, including image classification and image generation.

Link to Paper: https://arxiv.org/pdf/2211.08095.pdf

Their findings indicate a negative impact on downstream performance attributable to the presence of AI-generated images in training datasets. Notably, the extent of this impact varies depending on the task and the proportion of generated images within the dataset.
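Since the proportion of generated images is the variable that matters here, a small sketch may help show what “contaminating” a dataset at a chosen ratio could look like. This is not the authors’ pipeline; `mix_datasets`, its parameters, and the placeholder image records are hypothetical names I made up for illustration.

```python
import random

def mix_datasets(real, generated, generated_fraction, size, seed=0):
    """Build a training set of `size` items with a fixed share of generated items.

    Hypothetical helper: the items can be anything (file paths, records).
    """
    if not 0.0 <= generated_fraction <= 1.0:
        raise ValueError("generated_fraction must be between 0 and 1")
    rng = random.Random(seed)  # seeded so the mix is reproducible
    n_generated = round(size * generated_fraction)
    n_real = size - n_generated
    # Sample without replacement from each pool, then shuffle together.
    mixed = rng.sample(generated, n_generated) + rng.sample(real, n_real)
    rng.shuffle(mixed)
    return mixed

# Placeholder records standing in for real and AI-generated images.
real_images = [("real", i) for i in range(100)]
generated_images = [("generated", i) for i in range(100)]

train_set = mix_datasets(real_images, generated_images,
                         generated_fraction=0.4, size=50)
share = sum(1 for source, _ in train_set if source == "generated") / len(train_set)
print(len(train_set), share)
```

Training the same model on sets built with different values of `generated_fraction`, then comparing results on a clean test set, is the general shape of experiment that would reveal how much contamination a task can tolerate.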

This research underscores the broader implications of relying on AI-generated content for training future AI models, not just in text but also in the visual domain.

Ryuichiro Hataya, Han Bao, and Hiromi Arai

As AI-generated images become indistinguishable from real ones and increasingly populate the web, separating human-made from machine-generated content gets harder, posing a risk to the integrity of training datasets and, by extension, the robustness and reliability of future AI systems. This study, together with the previous findings on text generation, highlights a critical challenge facing the field of artificial intelligence: ensuring the diversity and quality of training data in the face of rapidly advancing generative models. The reliance on AI to produce content for training subsequent AI models can lead to a feedback loop that progressively narrows the scope of learned patterns, potentially stifling the models’ ability to innovate and accurately represent the complexity of the real world.
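The feedback loop can be pictured with a deliberately simple toy model. It assumes, and this is my assumption rather than a finding of either study, that each new generation slightly over-represents whatever patterns were already most common in its training data. The `sharpening` exponent, the four-pattern distribution, and the function names are all hypothetical.

```python
import math

def entropy(probs):
    """Shannon entropy in bits; higher means more varied output."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def next_generation(probs, sharpening=1.2):
    """One round of 'training on your own output'.

    Assumption: common patterns get boosted a little each round, modeled
    by raising probabilities to a power above 1 and renormalizing.
    """
    weights = [p ** sharpening for p in probs]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical starting mix of four stylistic patterns.
probs = [0.4, 0.3, 0.2, 0.1]
history = [entropy(probs)]
for _ in range(10):
    probs = next_generation(probs)
    history.append(entropy(probs))

for generation, bits in enumerate(history):
    print(f"generation {generation}: {bits:.2f} bits")
```

In this toy, variety shrinks every round and the most common pattern ends up dominating. Real models are far messier, but the sketch captures why a small bias, repeated across generations, can add up to blandness.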

Reimagining Education: The Indispensable Human Touch in the AI Era

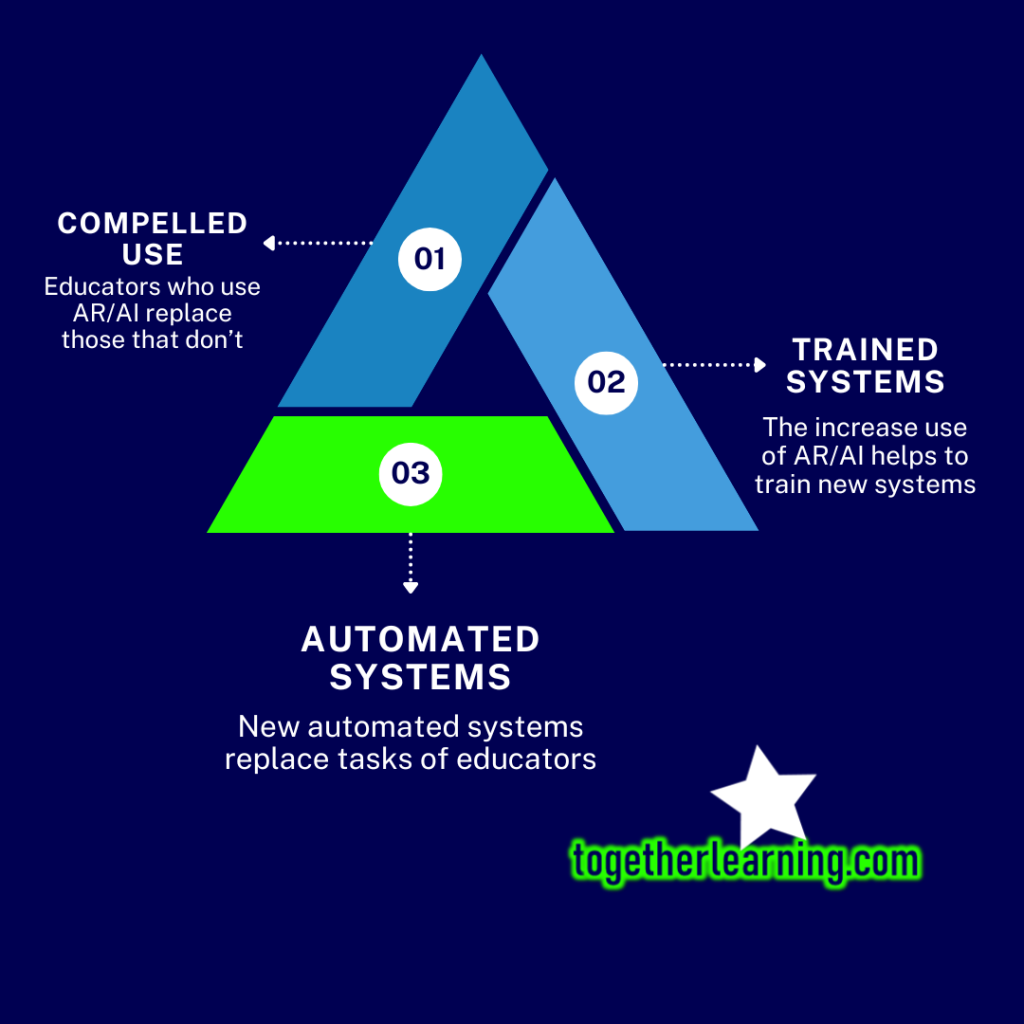

As we stand on the cusp of a new era in educational technology, where generative AI models begin to play increasingly significant roles in learning environments—from language learning apps like Duolingo to groundbreaking AI teaching assistant projects at institutions like Harvard—we are faced with profound questions about the future of education and the role of human educators within it. In my consultations with various educational institutions, a recurring concern among teaching staff and faculty is the potential for their teaching materials to be assimilated into training datasets for AI, essentially automating their roles and diminishing their contributions to education.

This fear, while understandable, overlooks a crucial aspect of education that AI, for all its advancements, cannot replicate: the human touch. The evidence gathered from the recent studies on the diminishing creativity and diversity of AI-generated content not only highlights the limitations of AI but also offers hope for the continued necessity of human involvement in the educational process. It’s clear that AI, when left to its own devices, trends towards homogenization, creating a “blanding” of content that lacks the richness and nuance of materials crafted by human educators.

The Role of Educators in the Age of AI

This brings us to a pivotal realization: the future of education will not see human educators replaced by machines but will instead require them to adapt and thrive in new roles. Educators will become even more crucial as mentors, coaches, and counselors, positions that leverage the irreplaceable human capacity for empathy, creativity, and nuanced understanding. These roles cannot be automated away; they are inherently human and vital for fostering genuine learning experiences. I explored this concept in a recent keynote address I gave at the World Immersive Learning Labs Symposium in Tokyo, Japan, in March 2024.

Moreover, the evidence suggests a future where educators will need to be at the forefront of content creation, devising new and novel ways to engage students. As AI-generated educational content becomes more prevalent, its limitations will become apparent. The “blanding” effect of AI-generated materials will create a demand for innovative, diverse, and creative content that can only be met by human educators. Their role will evolve to include curating and creating educational materials that resonate on a human level, addressing the individual needs, interests, and cultural backgrounds of students in ways that AI cannot.

Human Creativity: The Antidote to AI’s Limitations

This evolution in the role of educators underscores the importance of human creativity and insight in the educational process. Just as artists and content creators are finding ways to coexist with and leverage AI in their work, educators will find new opportunities to harness AI as a tool while providing the critical human elements that AI lacks. The challenge of AI in education is not a harbinger of obsolescence for human educators but a call to action to redefine and reassert the value of the human element in learning.

As we navigate the integration of AI into educational contexts, it is paramount to remember that technology is a tool to enhance, not replace, the human aspects of education. The future of learning will not be defined by the capabilities of AI alone but by our ability to merge these capabilities with the irreplaceable qualities of human educators. The “blanding” effect of AI-generated content only highlights the evergreen need for human creativity, empathy, and insight in education, ensuring that there will always be a significant place for humans in the loop, shaping the minds of future generations.

About the Author

Eric Hawkinson

Learning Futurist

erichawkinson.com

Eric is a learning futurist, tinkering with and designing technologies that may better inform the future of teaching and learning. Eric’s projects have included augmented tourism rallies, AR community art exhibitions, mixed reality escape rooms, and other experiments in immersive technology.

Roles

Professor – Kyoto University of Foreign Studies

Research Coordinator – MAVR Research Group

Founder – Together Learning

Developer – Reality Labo

Community Leader – Team Teachers

Chair – World Immersive Learning Labs